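Initialize the active learner with your data and, optionally, existing training data.

data_1 (Data) – Dictionary of records from the first dataset, where the keys are record_ids and the values are dictionaries with the keys being field names.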

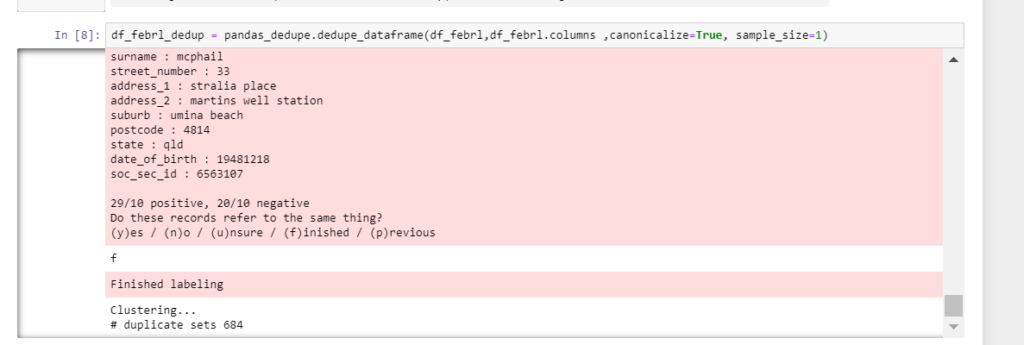

RecordLink(variables)

prepare_training(data_1, data_2, training_file=None, sample_size=1500, blocked_proportion=0.9)

# initialize from a defined set of fields
variables = …
deduper = dedupe.…

To load previously learned settings into a static matcher:

with open('learned_settings', 'rb') as f:
    matcher = StaticDedupe(f)

partition(data, threshold=0.5)

Identifies records that all refer to the same entity. Returns tuples containing a sequence of record ids and a corresponding sequence of confidence scores, each a float between 0 and 1. The record_ids within each set should refer to the same entity, and the confidence score is a measure of our confidence that a particular entity belongs in the cluster. For details on the confidence score, see ().

This method should only be used for small to moderately sized datasets. For larger data, you may need to generate your own pairs of records and feed them to score().

Parameters:
data – Dictionary of records, where the keys are record_ids and the values are dictionaries with the keys being field names.
threshold – Records are only put together into a cluster if the cophenetic similarity of the cluster is greater than the threshold. Lowering the number will increase recall; raising it will increase precision.

write_settings(f)

with open('learned_settings', 'wb') as f:
    matcher.write_settings(f)

cleanup_training()

Clean up data we used for training.

write_training(file_obj)

Write a JSON file that contains labeled examples.

Parameters:
file_obj (TextIO) – file object to write training data to.

Notes on the train() parameters. Training requires that adequate training data has already been provided.
recall – The proportion of true dupe pairs in the training data that the learned fingerprinting rules must cover. If we lower the recall, there will be pairs of true dupes that we will never directly compare. recall should be a float between 0.0 and 1.0.
index_predicates (bool) – Should dedupe consider predicates that rely upon indexing the data.
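The return shape described for partition() can be easier to picture with a small example. The sketch below does not call dedupe at all: the records and the clusters list are made-up sample values written in the documented shapes, and cluster_membership is a hypothetical helper name, not part of the library. It simply flattens (record ids, confidence scores) tuples into a per-record lookup.

```python
# Hypothetical records in the documented input shape:
# record_id -> {field name: value}
data = {
    1: {"name": "Ann Lee", "city": "Boston"},
    2: {"name": "Anne Lee", "city": "Boston"},
    3: {"name": "Bob Ray", "city": "Denver"},
}

# Made-up sample in the documented output shape of partition():
# each item is (sequence of record ids, sequence of confidence scores).
clusters = [
    ((1, 2), (0.93, 0.91)),
    ((3,), (1.0,)),
]


def cluster_membership(clusters):
    """Map each record_id to (cluster_id, confidence score).

    cluster_id is just the position of the cluster in the input list;
    it is an assumption of this sketch, not something dedupe assigns.
    """
    membership = {}
    for cluster_id, (record_ids, scores) in enumerate(clusters):
        # Record ids and scores are parallel sequences, so pair them up.
        for record_id, score in zip(record_ids, scores):
            membership[record_id] = (cluster_id, float(score))
    return membership


membership = cluster_membership(clusters)
for record_id, (cluster_id, score) in sorted(membership.items()):
    print(record_id, cluster_id, score, data[record_id]["name"])
```

With real output, the same loop could be fed the result of partition(data, threshold=0.5) directly, assuming it yields tuples in the shape described above.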

train(recall=1.0, index_predicates=True)

Learn final pairwise classifier and fingerprinting rules.

mark_pairs() is primarily designed to be used with uncertain_pairs() to incrementally build a training set. If you have existing training data, you should likely format the data into the right form and supply the training data to the prepare_training() method with the training_file argument. If that is not possible or desirable, you can use mark_pairs() to train a linker with existing data. However, you must ensure that every record that appears in the labeled_pairs argument appears in either the data or training file supplied to the prepare_training() method.

Would you recommend Picard versus a tool like Media Monkey? Some initial automation (like it sounds Picard can provide) is great, but then I prefer UI oversight and managing individual or bulk changes via a web UI, if possible. My understanding is that Picard is essentially taking it from the source (MusicBrainz), so I may as well use their tool, and it's straightforward to use in the GUI. Yet I believe Media Monkey uses the MusicBrainz-provided ID method, so it can do the same, but perhaps more? And maybe it can also be a player itself and/or a server for remote client access (versus Plex/Jellyfin), albeit perhaps not as good as those or Navidrome? The Media Monkey UI may be a bit more complicated (in my short comparison, Picard looked easier to use), and using Media Monkey as a player may suck compared to many other clients. With those known (or other?) potential caveats, would it be better, worse, or essentially just an equivalent alternative to Picard for initial tagging, scraping some metadata, and then overall management? I'm new over on the music side (Sonarr/Radarr/Sabnzbd/Jellyfin/Plex/TinyMediaManager/Kodi on the video side). In parallel to music, I will be expanding my Calibre/Jellyfin/LazyLibrary setup for my ebooks.
